Web scraping is taking the world by storm and becoming hugely popular across various industries. A journal published by the American Marketing Association states that 59% of the 300 articles associated with the top 5 marketing journals use data scraping to capture and describe evolving marketplace realities.

This powerful technique has become the go-to method for gathering valuable data and insights from the internet. For good reason, businesses, researchers, analysts, and individuals are all hopping on the web scraping bandwagon!

In this article, we will touch upon some fascinating 2023 real-world examples and use cases of web scraping.

By the end, you’ll get many ideas you can build yourself and better understand just how versatile web scraping is.

Let’s dive right in!

What Is Web Scraping for Price Monitoring and Comparison

Price monitoring and comparison means tracking and comparing the prices of products or services in the market.

Businesses and consumers use this strategy to stay competitive and make informed decisions. This information enables companies/service providers to maintain their market position, optimize pricing strategies, and drive more sales.

Web scrapers for price monitoring are widely used. Gathering price information (aka price scraping) not only fills in data gaps for researchers but also lets businesses stay up to date with the competition. A startup can gain insights and make valuable marketing decisions to boost its market share. Keep in mind that if scraping a website violates its Terms of Service, the scraper may face legal action.

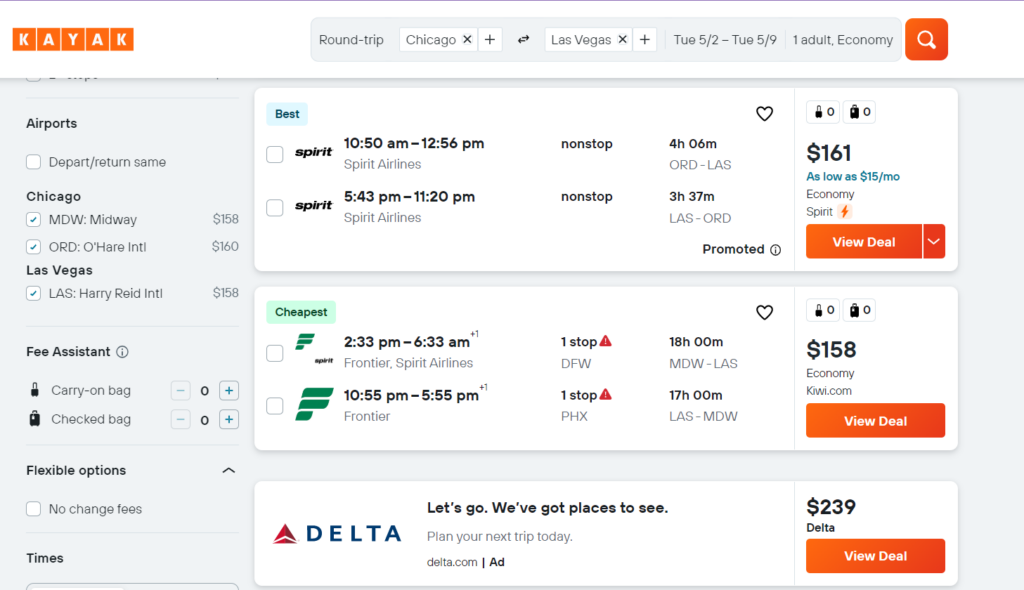

For instance, take the travel industry. Websites like Expedia or Kayak rely on web scraping to gather pricing information from airlines, hotels, and car rental companies. Web scrapers help them provide users with the best deals available.

E-commerce businesses use web scraping to monitor competitor pricing and adjust prices accordingly.
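To make the idea concrete, here is a minimal sketch of the comparison step that runs after prices have been scraped. The shop names and price strings are invented for illustration, and the parser assumes US-style formatting (comma as thousands separator, dot for decimals); real pages usually need per-site handling.

```python
import re
from decimal import Decimal

# Hypothetical scraped price strings; the shop names are invented for illustration.
SCRAPED_PRICES = {
    "shop-a.example": "$24.99",
    "shop-b.example": "USD 22.50",
    "shop-c.example": "$1,024.00",
}


def parse_price(text):
    """Return the first number in a price string as a Decimal."""
    match = re.search(r"\d[\d,]*(?:\.\d+)?", text)
    if match is None:
        raise ValueError(f"no price found in {text!r}")
    # Drop thousands separators before converting.
    return Decimal(match.group().replace(",", ""))


def cheapest(prices):
    """Return (shop, price) for the lowest parsed price."""
    parsed = {shop: parse_price(raw) for shop, raw in prices.items()}
    shop = min(parsed, key=parsed.get)
    return shop, parsed[shop]


def undercut_by(own_price, prices):
    """List the shops whose price is lower than our own."""
    return sorted(shop for shop, raw in prices.items() if parse_price(raw) < own_price)
```

Calling `cheapest(SCRAPED_PRICES)` picks the lowest offer, and `undercut_by(Decimal("23.00"), SCRAPED_PRICES)` lists the competitors currently priced below you. `Decimal` is used instead of `float` so that prices compare exactly.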

In a study published in the International Journal of Innovative Research in Technology, the authors describe how Amazon became a leader in its industry by “using web scraping and continued price monitoring” to keep its pricing competitive.

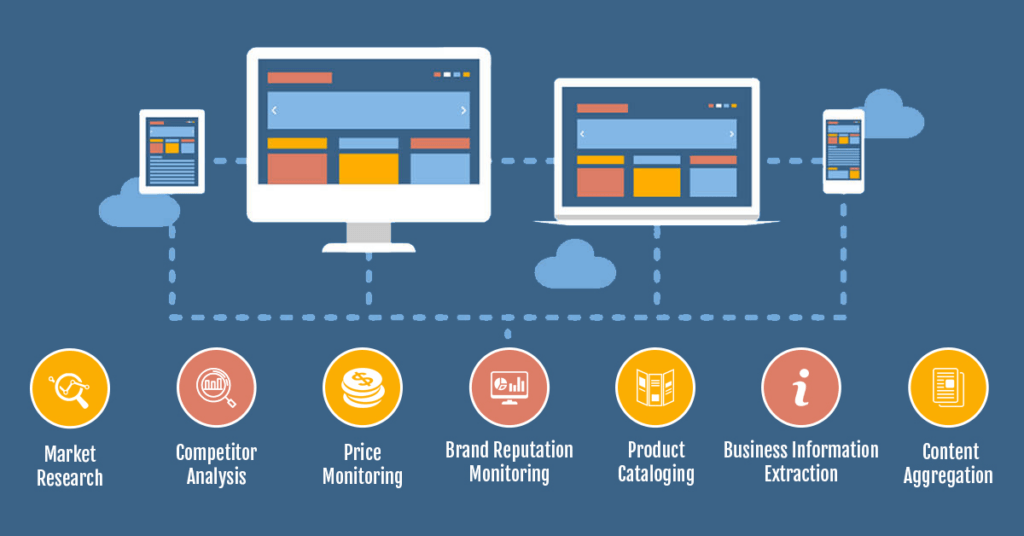

Market Research and Competitor Analysis

Let’s talk about how web scraping is a secret weapon in market research and competitor analysis.

Businesses use it to understand their target audience, identify trends, and keep up with what their competitors do.

By collecting and analyzing data from various online sources, businesses can gain insights that help them make better decisions and stay ahead.

You can collect valuable data like product descriptions, customer experience and reviews, social media sentiment, marketing campaigns, website traffic, and SEO strategies. Public information can be scraped and analyzed for customer service or for your individual or business needs.

Image Source: Data Entry India

One example is the fashion industry, where brands can use web scraping to analyze trending styles and colors from social media platforms, blogs, and e-commerce sites.

All this information helps them stay on top of fashion trends and tailor their product offerings accordingly.

Another example comes from the world of startups, where entrepreneurs can use web scraping to analyze competitors’ websites and social media presence, giving them a better understanding of the market and their competition.

Not only does this intel help them shape their strategies, but it also lets them carve out a niche in the market.

One great example is Zara, where more than 300 designers scrape the market to size up the competition and stay aligned with customers' actual requirements and budgets. This insight allowed Zara to maintain a smooth supply chain, ultimately giving it the edge over its competitors. In today’s technological age, it’s all about Big Data, which Zara seems to have mastered.

Read more from our Web Scraping Series:

- The fundamental tools, techniques, and best practices for web scraping

- Introduction to Python as a powerful language for web scraping

- Web Scraping Tools and Services: A Comprehensive Review

- Common Challenges and Use Cases in Web Scraping

Sentiment Analysis and Social Media Monitoring

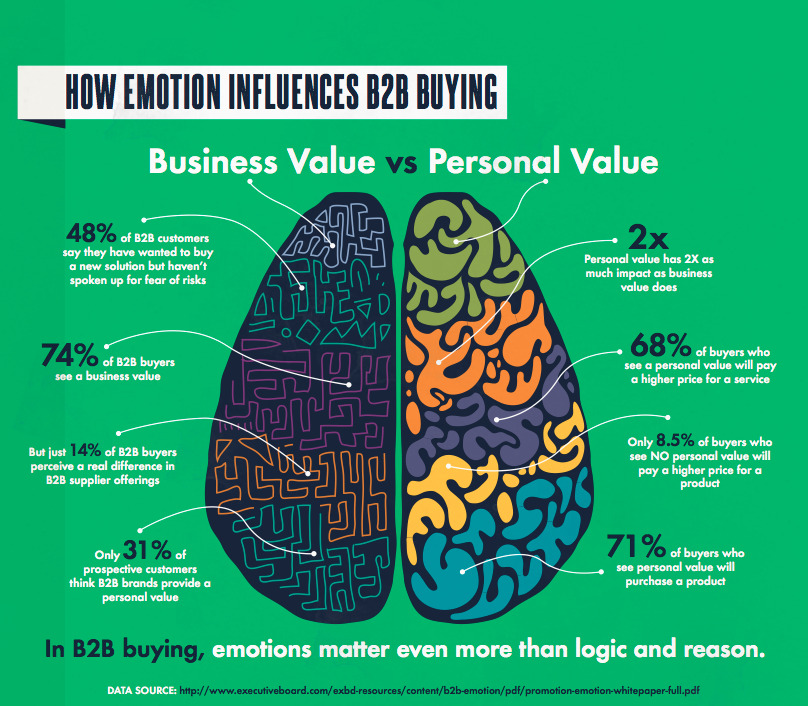

We live in the digital media age, making sentiment analysis and monitoring super important. They help businesses understand how consumers feel about their products, services, or brand in general.

Data-driven companies are estimated to be 19 times more likely to be profitable and are 52% better at understanding their customers.

No matter how you look at it, emotions influence even the B2B market. These sentiments can be as simple as seeing a personal benefit or feeling loyal to a company.

Image Source: Business2Community

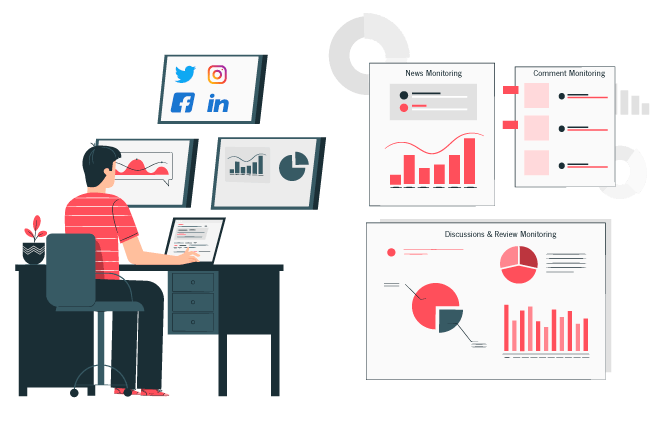

Companies can gain valuable insights and address customer concerns by being active on social media platforms, forums, and review sites.

Web scraping can help you collect and aggregate data from various social networking sites, such as tweets, Facebook posts and short videos, Instagram stories and comments, and reviews on websites like Yelp or Amazon. You can also use social listening tools for popular social media platforms to gain new insights in real time.

Once you gather this data, use natural language processing techniques to analyze the sentiment and identify trends or patterns.
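As a rough illustration of that analysis step, here is a minimal lexicon-based scorer. The word lists are tiny and invented for this sketch; production systems use much larger lexicons or trained language models, and this approach ignores negation and sarcasm.

```python
import re

# Tiny illustrative lexicons (assumptions for this sketch, not a real dataset).
POSITIVE = {"love", "great", "excellent", "happy", "good"}
NEGATIVE = {"hate", "terrible", "awful", "bad", "disappointed"}


def sentiment_score(text):
    """Count positive words minus negative words in the text."""
    words = re.findall(r"[a-z']+", text.lower())
    return sum(w in POSITIVE for w in words) - sum(w in NEGATIVE for w in words)


def label(text):
    """Map the score to a coarse sentiment label."""
    score = sentiment_score(text)
    if score > 0:
        return "positive"
    if score < 0:
        return "negative"
    return "neutral"


def summarize(posts):
    """Tally labels across a batch of scraped posts."""
    counts = {"positive": 0, "negative": 0, "neutral": 0}
    for post in posts:
        counts[label(post)] += 1
    return counts
```

Running `summarize` over a batch of scraped reviews gives a quick first read on how opinion is split before you invest in heavier tooling.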

Image Source: Xbyte

Sentiment analysis and social media monitoring are widely used in the entertainment industry.

Movie studios and streaming services can use web scraping to collect audience reactions and reviews from social media and other platforms. With all this intel, they can gauge the success of a movie or TV show and make better decisions for future projects.

Another example comes from the world of politics, where web scraping can be used to monitor public sentiment and opinions on social media. This information can help political campaigns adjust their messaging and strategies, work on PR, and attract more followers.

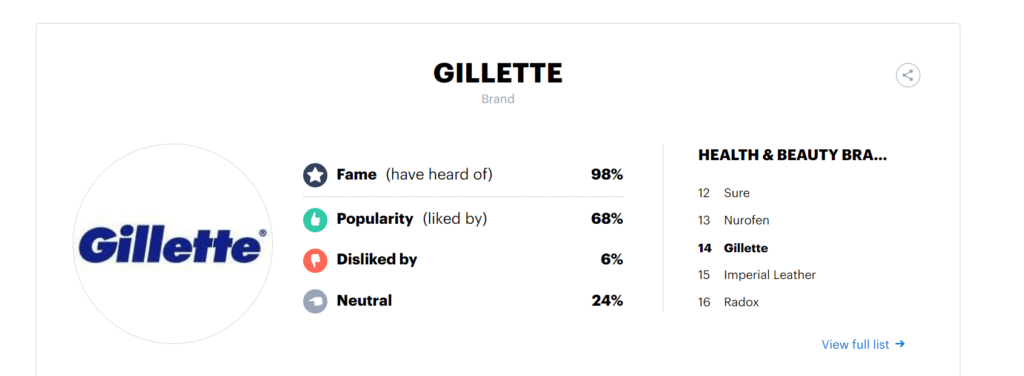

Let’s take the example of Gillette.

Here is Gillette’s YouGov BrandIndex score, showing consumer sentiment towards the company:

Image Source: YouGov

After Gillette received negative feedback on one of its videos in 2019, its YouGov BrandIndex buzz score dropped by about 6 points. Gillette had to do something to build trust again. The company carried out a full-fledged sentiment analysis and launched a new campaign called “#MyBestSelf.”

This helped them tackle the negative buzz score and gave them a positive public reaction.

Job Aggregation and Talent Sourcing

Job aggregation is all about collecting job listings from various sources and consolidating them in one place, making it easier for job seekers to find opportunities.

On the other hand, talent sourcing is when recruiters and HR professionals use web scraping to find potential candidates by collecting data from job boards, social media platforms, and professional networking sites.

If you are a recruiter, you can quickly identify and reach out to potential candidates, streamlining the recruitment process and increasing the chances of finding the right fit for open positions.

Image Source: SmartRecruiters

A classic example of web scraping is job search websites like LinkUp, which use web scrapers to aggregate job listings from various company websites, job boards, and other sources. This way, job seekers can access a wide range of opportunities all in one place.
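The consolidation step can be sketched as merging listings from several sources and dropping duplicates. The field names (`title`, `company`, `location`) and the sample data are assumptions made for this example; this is not how LinkUp or any other site actually does it.

```python
def normalize(value):
    """Lowercase and collapse whitespace so near-identical strings compare equal."""
    return " ".join(value.lower().split())


def aggregate(*sources):
    """Merge job listings from several sources, keeping the first copy of each job."""
    seen = set()
    merged = []
    for listings in sources:
        for job in listings:
            key = (
                normalize(job["title"]),
                normalize(job["company"]),
                normalize(job["location"]),
            )
            if key not in seen:
                seen.add(key)
                merged.append(job)
    return merged


# Hypothetical listings scraped from two different places.
company_site = [
    {"title": "Data Engineer", "company": "Acme", "location": "Berlin"},
]
job_board = [
    {"title": "data engineer ", "company": "ACME", "location": "Berlin"},
    {"title": "Web Developer", "company": "Globex", "location": "Remote"},
]

all_jobs = aggregate(company_site, job_board)
```

Here `all_jobs` contains two listings, because the job board's copy of the Data Engineer role matches the company site's after normalization. Sources passed first win, so list the most trusted source first.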

It’s also interesting to note that many job platforms like Indeed started as job aggregators and later expanded into the job boards we know today. However, they still use some web scraping to grow further.

Another famous example is LinkedIn, which, besides its networking features, can be used by recruiters to scrape user profiles for relevant skills, experience, and qualifications.

Hired is another example: an online marketplace connecting tech experts with hiring companies.

The website uses job aggregation, as described above, to build its database of jobs and candidates.

Machine learning is responsible for matching these jobs/requirements with the qualifications and preferences of their potential candidates. Combining these two powerful tools allows the website to provide fast and effective service to employers and workers.

News Aggregation and Content Curation

In today’s world, we are constantly bombarded with information of all sorts, and this is where news aggregation and content curation come in. With them, you don’t have to read endless articles and sources to get the information you want.

By consolidating news stories, blog posts, and other content into a single, organized platform, you can quickly access the information you seek.

Web scraping is a fantastic tool for gathering and organizing content for news aggregation and content curation. Web scrapers automatically extract headlines, article summaries, and other relevant data from various news websites, blogs, and other online sources.
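Here is a minimal sketch of headline extraction using only Python's standard library. It assumes headlines live in `<h2>` tags, which is an assumption about the page; every real site has its own markup, so the tag to look for will differ.

```python
from html.parser import HTMLParser


class HeadlineParser(HTMLParser):
    """Collect the text inside every <h2> element."""

    def __init__(self):
        super().__init__()
        self.headlines = []
        self._in_headline = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._in_headline = True
            self._buffer = []

    def handle_data(self, data):
        if self._in_headline:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "h2" and self._in_headline:
            # Collapse runs of whitespace left over from the markup.
            self.headlines.append(" ".join("".join(self._buffer).split()))
            self._in_headline = False


def extract_headlines(html):
    parser = HeadlineParser()
    parser.feed(html)
    parser.close()
    return parser.headlines


# Invented sample page; a real scraper would download this HTML first.
SAMPLE_PAGE = """
<html><body>
  <h2><a href="/a">Markets rally after rate decision</a></h2>
  <p>Summary text...</p>
  <h2>New  telescope images released</h2>
</body></html>
"""

headlines = extract_headlines(SAMPLE_PAGE)
```

Text nested in links inside the heading is still captured, because the parser records all character data between the opening and closing `<h2>` tags.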

Once the data is collected, you can organize and present it, making it easy for people to stay informed about their interests.

A well-known example of content curation platforms is Google News, which uses web scraping to aggregate news articles from different sources based on users’ preferences and interests.

Doing this provides a personalized news experience, making it easier for users to find and consume the content that matters to them.

Another example is platforms that curate content, like Pocket and Flipboard. They use web scraping to collect articles, blog posts, and other content from various sources. These platforms allow users to save, organize, and share the most engaging content, creating a personalized reading experience.

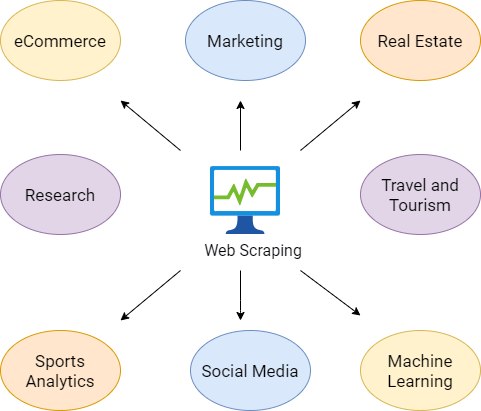

Other Notable Use Cases and Examples

Web scraping has many applications beyond the ones we’ve already discussed. Let’s briefly touch on a few more notable use cases:

Lead Generation

Businesses can use web scraping to collect contact information and other relevant data about potential customers from online directories, social media platforms, and websites.

This intel is then used to build targeted marketing campaigns and generate new leads.
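As a small illustration, the sketch below pulls email addresses out of scraped page text and removes duplicates. The regular expression is a deliberately simple approximation rather than a full email validator, and the sample text is invented. Check the site's terms and the privacy rules that apply to you before collecting contact data.

```python
import re

# Simplified pattern: good enough for a sketch, not a complete email grammar.
EMAIL_PATTERN = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")


def extract_emails(text):
    """Return unique email addresses in order of first appearance, lowercased."""
    found = (match.lower() for match in EMAIL_PATTERN.findall(text))
    # dict.fromkeys drops duplicates while preserving order.
    return list(dict.fromkeys(found))


# Invented sample of scraped directory text.
SAMPLE_TEXT = (
    "Contact sales@example.com or Support@Example.com. "
    "Press enquiries: press@example.org. Sales again: sales@example.com"
)

leads = extract_emails(SAMPLE_TEXT)
```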

Academic Research

Researchers can use web scraping to gather data from online sources like news websites, scientific journals, and social media platforms for various purposes.

This can help them identify trends, analyze patterns, and draw conclusions that can contribute to their field of study.

Real Estate

Web scraping can collect information about property listings, market trends, and pricing data from various real estate websites.

This information can help real estate professionals make informed decisions about property investments and better serve their clients.

Stock Market Analysis

Financial analysts can use web scraping to gather data from financial websites, news articles, and social media platforms to analyze stock market trends, monitor company performance, and make data-driven investment decisions.

Machine Learning and Artificial Intelligence

Web scraping can gather vast amounts of data for machine learning models and AI algorithms.

This data can help train these models to perform tasks like image recognition, natural language processing, and recommendation systems more accurately and efficiently.

Image Source: Web Harvy

These examples only scratch the surface of how vast the uses of web scraping are across different industries and applications.

Conclusion on What Is Web Scraping in 2023

As we’ve seen, web scraping is an incredibly versatile and effective tool that spans various industries and applications.

From price monitoring and market research to sentiment analysis and content curation, web scraping has proven valuable in collecting and organizing data from the vast digital landscape.

The potential for web scraping to drive data-driven decision-making cannot be overstated. With the power to collect and analyze large amounts of data quickly and efficiently, businesses and individuals can make more informed decisions, identify opportunities, and stay ahead of the competition. Data is king today, and web scraping is crucial for harnessing its power.

So, what are you waiting for?

We encourage you to explore the possibilities of web scraping in your respective fields. Whether you’re looking to gain insights, improve your processes, or stay informed, web scraping might be the solution to level up your game in our increasingly connected and data-driven world.

Stay tuned for more and download GoLogin to scrape even the most advanced web pages yourself!

Hey there! At GoLogin, we are constantly working to improve our app and add new features for a better browsing experience.

Here’s a 3-minute recap of what we made better:

1. Community Forum – ask anything and share your experience ⚡️

We believe with your help and feedback we can grow together. Feel free to ask questions and share your experiences in our Community Forum to sharpen your skills and knowledge.

You can leave comments under articles as well!

2. GoLogin Parsing Tool – import accounts faster ⚡️

Forget the routine of creating accounts! Just drag and drop your TXTs or CSVs into Parser.online to parse and import profiles into GoLogin.

At the moment, it’s in beta. That’s because we’re working on implementing parsing features right into GoLogin Import, so you don’t have to waste time switching between multiple apps or tools. Keep an eye on our next updates!

3. Affiliate Payments Cabinet – keep track and withdraw your earnings ⚡️

Monitor your Affiliate Payments and withdraw rewards in one place. The cabinet is available both in the app and web version.

Also, a summary of your affiliate earnings will be emailed to you once a month to keep you informed about your rewards.

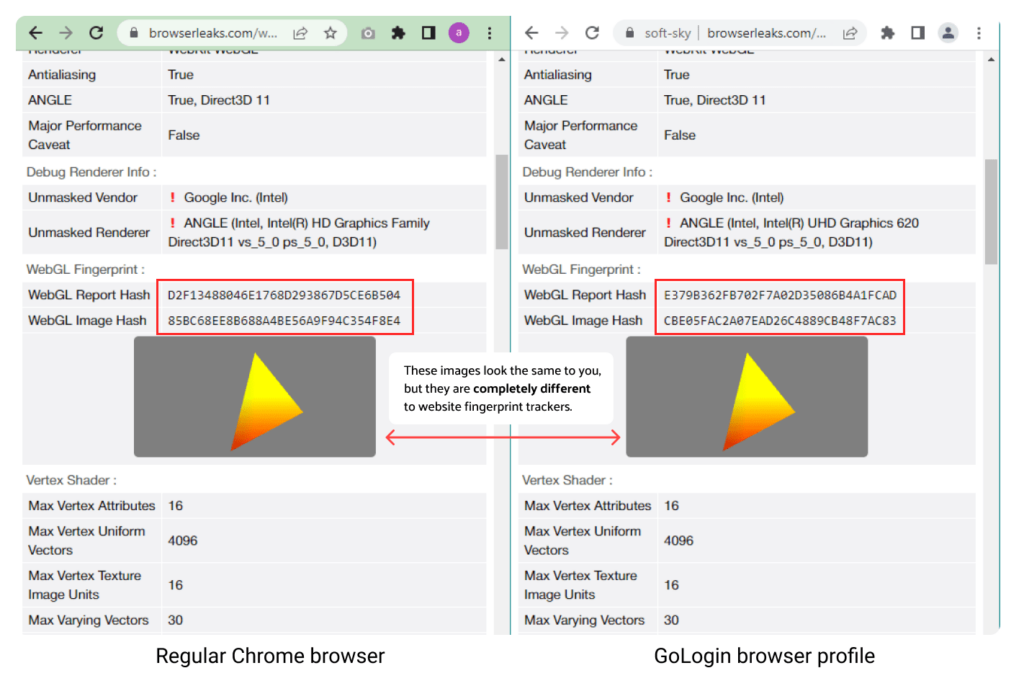

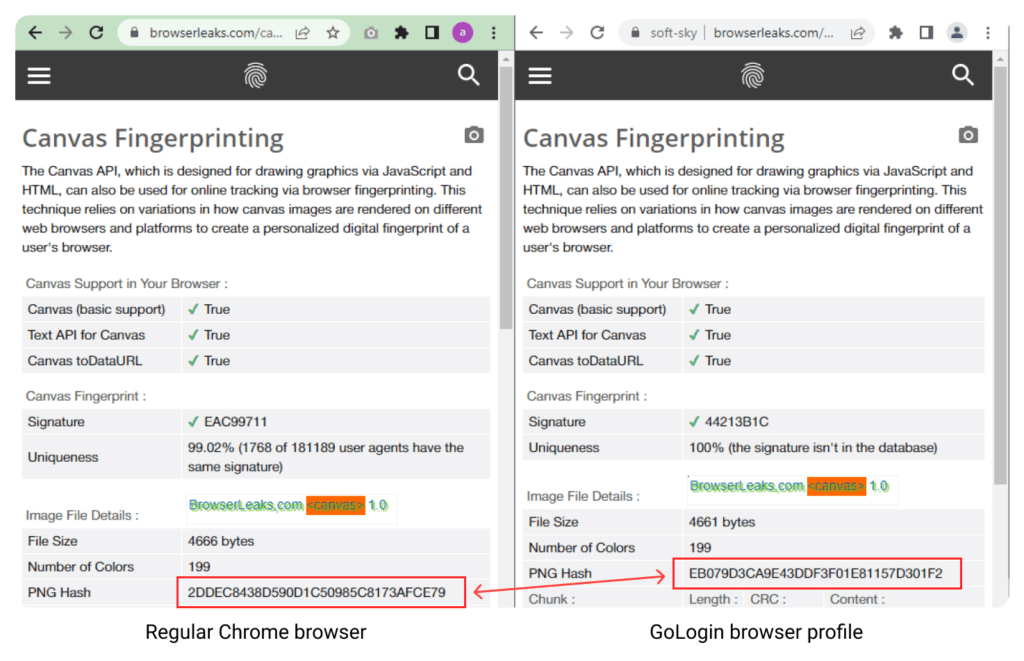

4. Noise in Canvas / WebGL algorithm updates – your data is protected ⚡️

Here’s a quick explanation of how this one works.

WebGL and Canvas are browser APIs for rendering graphics (3D and 2D). They expose information about the user’s graphics hardware to website trackers.

Every device out there renders the same image in a unique way (code-wise), although these differences are invisible to your eye. Because the image rendering information is unique, it’s useful for generating a browser fingerprint.

Curious about what your device fingerprint contains? Take a look.

Using custom mathematical algorithms, GoLogin adds “noise” to your WebGL and Canvas rendering data, making the image drawn by your hardware look both unique and normal in every browser profile you run in GoLogin – keeping them protected from tracking.

Again, these changes can’t be seen (as shown in the screenshots), but the image hash will be different.
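To show why an invisible change still produces a different hash, here is a toy sketch. It is not GoLogin's algorithm: the "render" is a made-up grayscale gradient, the noise simply nudges a few pixels by one brightness step, and the names `render_pixels`, `add_noise`, and `fingerprint` are invented for this example.

```python
import hashlib
import random


def render_pixels(width=16, height=16):
    """Stand-in for a canvas render: a deterministic grayscale gradient."""
    return bytes((x * 7 + y * 13) % 256 for y in range(height) for x in range(width))


def add_noise(pixels, profile_seed, changes=8):
    """Nudge a few pixels by one brightness step, chosen from a per-profile seed."""
    rng = random.Random(profile_seed)
    noisy = bytearray(pixels)
    for index in rng.sample(range(len(noisy)), changes):
        # Step up by 1, or down by 1 when the pixel is already at the maximum.
        noisy[index] += 1 if noisy[index] < 255 else -1
    return bytes(noisy)


def fingerprint(pixels):
    """Hash the pixel data the way a tracker might hash a canvas image."""
    return hashlib.sha256(pixels).hexdigest()


original = render_pixels()
profile_a = add_noise(original, "profile-a")

# The largest per-pixel difference is a single brightness step ...
max_difference = max(abs(a - b) for a, b in zip(original, profile_a))
# ... yet the hash no longer matches the unmodified render.
hash_changed = fingerprint(profile_a) != fingerprint(original)
```

Because the noise is derived from the profile seed, the same profile produces the same image every time, which is what lets a profile look stable across sessions while still differing from the underlying hardware.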

So, thanks to WebGL/Canvas Noise, all of your GoLogin profiles are completely unique for your device, but also normal compared to others out there – they look like millions of other regular internet users. Modern website tracking engines learn and evolve all the time as well, so we constantly update these algorithms for your best browsing experience.

5. Orbita v109 will launch by default on Windows 8.1 and older – better OS compatibility ⚡️

We recommend updating your device to a newer Windows version for an optimal browsing experience and better performance. However, if you use older Windows versions, Orbita 109 will be used for better OS compatibility.

In our latest release (3.2.18 Jupiter) we have also improved the flow of choosing the Orbita version in profile settings for older Windows versions.

You can manually switch the Orbita version when creating a new profile in the Advanced settings tab.

Last, but not least: NPM update

In our latest GoLogin release, we’ve also updated npm to v2.0.11, fixing problems with geolocation and bookmarks.

Using GoLogin for work? We’ll be glad to hear your feedback and feature suggestions on our social media! Feel free to mention @gologinapp on Twitter to share your work insights and experience 🙂

Download GoLogin here and check out all the new features yourself!